Killing a Bad (Arbitrage) Bot … To Save Its Owner

Following our previous white-hat hacks (1, 2) on contracts flagged by our analysis tools, today we’ll talk about another interesting contract. It’s hackable for about $80K, or rather its users are: the contract is just an enabler, holding approvals from users and acting on their commands. However, a vulnerability in the enabler allows stealing all the users’ funds. (Of course, we mitigated the vulnerability before posting this article.)

The vulnerable contract is a sophisticated arbitrage bot, with no source on Etherscan. Since it is an arbitrage bot, it’s not surprising that we were unable to identify either the contract owner/deployer or its users.

One may question whether we should have expended effort just to save an arbitrageur. However, our mission is to secure the smart contract ecosystem, via our free contract-library service, research, consulting, and audits. Furthermore, arbitrage bots do have a legitimate function in the Ethereum space: the robustness of automated market makers (e.g., Uniswap) depends on the existence of bots. Because bots define a super-efficient trading market, price manipulators have no expected benefit from biasing a price: the bots will eat their profits. (Security guaranteed by the presence of relentless competition is an enormously cool element of the Ethereum ecosystem, in our book.)

Also, thankfully, this hack is a great learning opportunity. It showcases at least three interesting elements:

Lack of source code, or general security-by-obscurity, won’t save you for long in this space.

There is a rather surprising anti-pattern/bad smell in Solidity programming: the use of this.function(...) instead of just function(...).

It’s a lucky coincidence when an attack allows destroying the means of attack itself! In fact, it is the most benign mitigation possible, especially when trying to save someone who is trying to stay anonymous.

Following a Bad Smell

The enabler contract has no source code available. It is not even decompiled perfectly, with several low-level elements (e.g., use of memory) failing to be converted to high-level operations. Just as an example of the complexity, here is the key function for the attack and a crucial helper function (don’t pay too close attention yet - we’ll point you at specific lines later):

function 0xf080362c(uint256 varg0, uint256 varg1) public nonPayable {

require(msg.data.length - 4 >= 64);

require(varg1 <= 0xffffffffffffffff);

v0, v1 = 0x163d(4 + varg1, msg.data.length);

assert(v0 + 0xffffffffffffffffffffffffffffffffffffffffffffffffffffffffffffffff < v0);

v2 = 0x2225(v1, v1 + (v0 + 0xffffffffffffffffffffffffffffffffffffffffffffffffffffffffffffffff << 5));

v3 = v4 = 0x16b6(96 + v2, v2 + 128);

v5 = v6 = 0;

while (v5 < v0) {

if (varg0 % 100 >= 10) {

assert(v5 < v0);

v7 = 0x2225(v1, v1 + (v5 << 5));

v8 = 0x16b6(64 + v7, v7 + 96);

MEM[MEM[64]] = 0xdd62ed3e00000000000000000000000000000000000000000000000000000000;

v9 = 0x1cbe(4 + MEM[64], v8, this);

require((address(v3)).code.size);

v10 = address(v3).staticcall(MEM[(MEM[64]) len (v9 - MEM[64])], MEM[(MEM[64]) len 32]).gas(msg.gas);

if (v10) {

MEM[64] = MEM[64] + (RETURNDATASIZE() + 31 & ~0x1f);

v11 = 0x1a23(MEM[64], MEM[64] + RETURNDATASIZE());

if (v11 < 0x8000000000000000000000000000000000000000000000000000000000000000) {

0x1150(0, v8, address(v3));

0x1150(0xffffffffffffffffffffffffffffffffffffffffffffffffffffffffffffffff, v8, address(v3));

}

} else {

RETURNDATACOPY(0, 0, RETURNDATASIZE());

revert(0, RETURNDATASIZE());

}

}

assert(v5 < v0);

v12 = 0x2225(v1, v1 + (v5 << 5));

v13 = 0x16b6(v12, v12 + 32);

assert(v5 < v0);

v14 = 0x2225(v1, v1 + (v5 << 5));

v15 = 0x1a07(32 + v14, v14 + 64);

assert(v5 < v0);

v16 = 0x2225(v1, v1 + (v5 << 5));

v17 = 0x16b6(64 + v16, v16 + 96);

assert(v5 < v0);

v18 = 0x2225(v1, v1 + (v5 << 5));

v19 = 0x16b6(96 + v18, v18 + 128);

assert(v5 < v0);

v20 = 0x2225(v1, v1 + (v5 << 5));

v21, v22 = 0x21c2(v20, v20 + 128);

MEM[36 + MEM[64]] = address(v17);

MEM[36 + MEM[64] + 32] = address(v3);

MEM[36 + MEM[64] + 64] = v23;

MEM[36 + MEM[64] + 96] = address(v19);

MEM[36 + MEM[64] + 128] = 160;

v24 = 0x1bec(v22, v21, 36 + MEM[64] + 160);

MEM[MEM[64]] = v24 - MEM[64] + 0xffffffffffffffffffffffffffffffffffffffffffffffffffffffffffffffe0;

MEM[64] = v24;

MEM[MEM[64] + 32] = v15 & 0xffffffff00000000000000000000000000000000000000000000000000000000 | 0xffffffffffffffffffffffffffffffffffffffffffffffffffffffff & MEM[MEM[64] + 32];

v25 = 0x1c7e(MEM[64], MEM[64]);

v26 = address(v13).delegatecall(MEM[(MEM[64]) len (v25 - MEM[64])], MEM[(MEM[64]) len 0]).gas(msg.gas);

if (RETURNDATASIZE() == 0) {

v27 = v28 = 96;

} else {

v27 = v29 = MEM[64];

MEM[v29] = RETURNDATASIZE();

RETURNDATACOPY(v29 + 32, 0, RETURNDATASIZE());

}

require(v26, 'delegatecall fail');

v23 = v30 = 0x1a23(32 + v27, 32 + v27 + MEM[v27]);

assert(v5 < v0);

v31 = 0x2225(v1, v1 + (v5 << 5));

v3 = v32 = 0x16b6(96 + v31, v31 + 128);

v5 += 1;

}

v33 = 0x20bd(MEM[64], v23);

return MEM[(MEM[64]) len (v33 - MEM[64])];

}

function 0x16b6(uint256 varg0, uint256 varg1) private {

require(varg1 - varg0 >= 32);

v0 = msg.data[varg0];

0x235c(v0); // no-op

return v0;

}

Key function decompiled. Unintelligible, right?

Faced with this kind of low-level complexity, one might be tempted to give up. However, there are many red flags. What we have in our hands is a publicly callable function that performs absolutely no checks on who calls it. No msg.sender check, no checks of storage locations to establish the current state it’s called under, none of the common ways one would protect a sensitive piece of code.

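And this code is not just sensitive, it is darn sensitive. It does a delegatecall (the address(v13).delegatecall(...) statement near the end of the loop) on an address that it gets from externally-supplied data (v13 is read straight from msg.data by the helper 0x16b6)! Maybe this is worth a few hours of reverse engineering?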

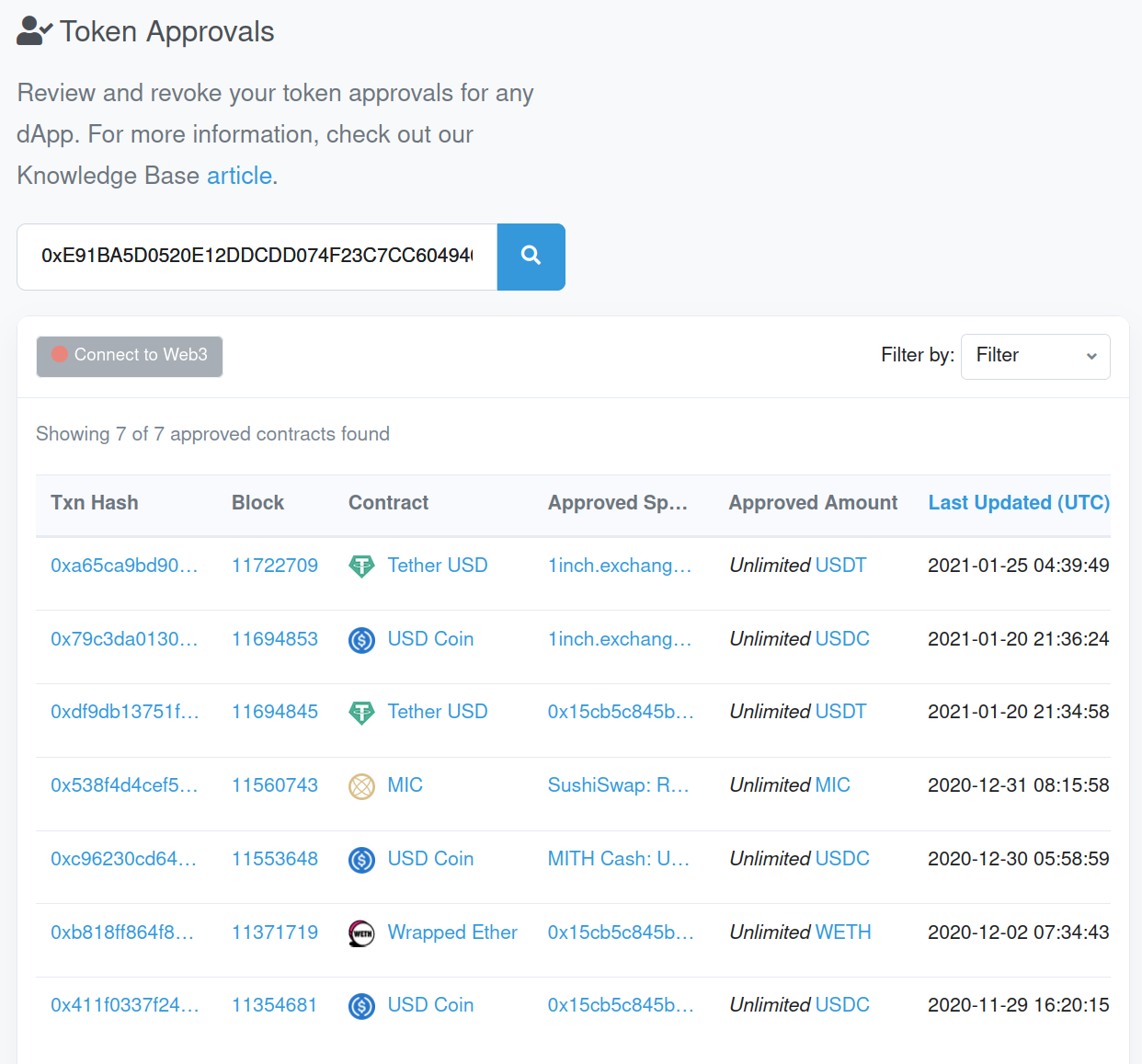

Vulnerable code in contracts is not rare, but most of these contracts are not used with real money. A query of token approvals and balances shows that this one is! There is a victim account that has approved the vulnerable enabler contract for all its USDT, all its WETH, and all its USDC.

And how much exactly is the victim’s USDT, USDC, and WETH? Around $77K at the time of the snapshot below.
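The exposure query described above can be sketched in Solidity. This is a minimal illustration under our own assumptions, not the tooling actually used: the contract name ExposureCheck and the function exposure are hypothetical, and only the standard ERC-20 allowance and balanceOf views are relied on. (The selector 0xdd62ed3e that appears in the decompiled listing is the ERC-20 allowance(address,address) selector.)

```solidity
// SPDX-License-Identifier: MIT
pragma solidity ^0.8.0;

// Minimal subset of the ERC-20 interface needed for the check.
interface IERC20 {
    function allowance(address owner, address spender) external view returns (uint256);
    function balanceOf(address account) external view returns (uint256);
}

// Hypothetical helper, for illustration only.
contract ExposureCheck {
    // Amount of `token` that `spender` could pull from `holder` right now:
    // the smaller of the granted allowance and the holder's balance.
    function exposure(address token, address holder, address spender)
        external
        view
        returns (uint256)
    {
        uint256 allowed = IERC20(token).allowance(holder, spender);
        uint256 balance = IERC20(token).balanceOf(holder);
        return allowed < balance ? allowed : balance;
    }
}
```

Calling exposure once per token (USDT, WETH, USDC), with the victim as holder and the enabler contract as spender, would give the per-token amounts at risk; converting them to dollars still requires external price data.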

Reverse Engineering

The above balances and suspicious code prompted us to do some manual reverse engineering. While also checking past transactions, the functionality of the vulnerable code was fairly easy to discern. At the end of our reverse-engineering session, here’s the massaged code that matters for the attack:

pragma experimental ABIEncoderV2;

contract VulnerableArbitrageBot is Ownable {

struct Trade {

address executorProxy;

address fromToken;

address toToken;

...

}

function performArbitrage(address initialToken, uint256 amt, ..., Trade[] memory trades) external onlyOwner {

...

IERC20(initialToken).transferFrom(..., address(this), amt); // ERC-20 transferFrom takes (from, to, amount); the sender is elided

...

this.performArbitrageInternal(..., trades); // notice the use of 'this'

}

function performArbitrageInternal(..., Trade[] memory trades) external {

for (uint i = 0; i < trades.length; i++) {

Trade memory trade = trades[i];

// ...

IERC20(trade.fromToken).approve(...);

// ...

trades[i].executorProxy.delegatecall(

abi.encodeWithSignature("trade(address,address...)", trade.fromToken, trade.toToken, ...)

);

}

}

}

interface TradeExecutor {

function trade(...) external returns (uint);

}

contract UniswapExecutor is TradeExecutor {

function trade(address fromToken, address toToken, ...) external returns (uint) {

// perform trade

...

}

}

This function, 0xf080362c, or performArbitrageInternal as we chose to name it (since the hash has no publicly known reversal), is merely doing a series of trades, as instructed by its caller. Examining past transactions shows that the code is exploiting arbitrage opportunities.

Our enabler is an arbitrage bot and the victim account is the beneficiary of the arbitrage!

Since we did not fully reverse engineer the code, we cannot be sure what the fatal flaw in the design is. Did the programmers consider the obscurity of bytecode-only deployment to be enough protection? Did they make function 0xf080362c/performArbitrageInternal accidentally public? Is the attack prevented when this function is only called by others inside the contract?

We cannot be entirely sure, but we speculate that the function was accidentally made public. Reviewing the transactions that call 0xf080362c reveals that it is never called externally, only as an internal transaction from the contract to itself.

The function being unintentionally public is an excellent demonstration of a Solidity anti-pattern.

Whenever you see the code pattern this.function(...) in Solidity, you should double-check the code.

In most object-oriented languages, prepending this to a self-call is a good pattern. It just says that the programmer wants to be unambiguous as to the receiver object of the function call. In Solidity, however, a call of the form this.function() is an external call to one’s own functionality! The call starts an entirely new sub-transaction, suffers a significant gas penalty, etc. There are some legitimate reasons for this.function() calls, but nearly none when the function is defined locally and when it has side-effects.

Even worse, writing this.function() instead of just function() means that the function has to be public (or external)! It is not possible to call an internal function by writing this.function(), although just function() is fine.

This encourages making public something that probably was never intended to be.
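To make the difference concrete, here is a small sketch of the two call styles. The contract and all its function names are hypothetical and written for illustration only; this is not the bot’s code. It assumes a 0.8.x compiler.

```solidity
// SPDX-License-Identifier: MIT
pragma solidity ^0.8.0;

// Hypothetical contract, for illustration only.
contract SelfCallDemo {
    address public lastSender;

    // Must be external (or public) for this.record() to compile,
    // which also means anyone else can call it directly.
    function record() external {
        lastSender = msg.sender;
    }

    // Internal helper: reachable only from code inside the contract.
    function _record() internal {
        lastSender = msg.sender;
    }

    // External self-call: a new message call, so msg.sender inside
    // record() is address(this), not the original caller.
    function viaThis() external {
        this.record();
    }

    // Plain internal call: stays in the same call frame, so
    // msg.sender is still the original caller.
    function viaInternal() external {
        _record();
    }

    // If an external self-call is truly needed, the entry point can
    // at least reject callers other than the contract itself.
    function recordGuarded() external {
        require(msg.sender == address(this), "self-call only");
        lastSender = msg.sender;
    }
}
```

After viaThis(), lastSender holds the contract’s own address; after viaInternal(), it holds the caller’s. An unguarded entry point like record() is the shape of the problem discussed here: it exists only to serve the self-call, yet it is open to everyone.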

The Operation

Armed with our reverse-engineered code, we could now put together attack parameters that would reach the delegatecall statement with our own callee. Once you reach a delegatecall, it’s game over! The callee gains full control of the contract’s identity and storage. It can do absolutely anything, including transferring the victim’s funds to an account of our choice.
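Why reaching a delegatecall is game over can be seen in a toy example. The two contracts below are hypothetical and deliberately benign; they only show that code run via delegatecall operates on the calling contract’s storage while keeping the original msg.sender. They assume a 0.8.x compiler and are not a reconstruction of the bot or of our transaction.

```solidity
// SPDX-License-Identifier: MIT
pragma solidity ^0.8.0;

// Hypothetical callee: writes to storage slot 0 of whichever
// contract's context it happens to execute in.
contract Callee {
    address public owner; // slot 0

    function takeOver() external {
        owner = msg.sender;
    }
}

// Hypothetical caller that delegatecalls into an address chosen by
// whoever calls it, with no access check.
contract Caller {
    address public owner; // slot 0, same layout as Callee

    constructor() {
        owner = msg.sender;
    }

    function run(address callee) external {
        // The callee's code runs with Caller's storage, balance,
        // and address; msg.sender remains the caller of run().
        (bool ok, ) = callee.delegatecall(abi.encodeWithSignature("takeOver()"));
        require(ok, "delegatecall fail");
    }
}
```

If any account calls run() with the address of a deployed Callee, Caller.owner becomes that account, while Callee’s own owner slot is untouched. The same mechanism lets a callee act under the calling contract’s identity, which is what makes an unchecked, caller-supplied delegatecall target so dangerous.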

But, of course, we don’t want to do that! We want to save the victim. And what’s the best way? Well, just destroy the means of attack, of course!

So, our actual attack does not involve the victim at all. We merely call selfdestruct on the enabler contract: the bot. The bot had no funds of its own, so nothing is lost by destroying it. To prevent re-deployment of an unfixed bot, we left a note on the Etherscan entry for the bot contract.

To really prevent deployment of a vulnerable bot, of course, one should commission the services of Dedaub. 🙂